Every JD Edwards system that has been running for a few years carries an unanswered question: how many of the custom objects developed over time are still fundamentally identical to the Oracle standard from which they derive, and how many have evolved to the point of becoming something entirely different? This question becomes urgent when facing an upgrade, a migration or a customisation audit. In most cases, the answer does not exist — because no one has ever sought it systematically. In this article, I describe how I approach this problem in my work, and the proprietary tool I developed to solve it.

What is JD Edwards and why does this problem exist?

JD Edwards (commonly known as JDE) is an enterprise resource planning (ERP) suite owned and developed by Oracle, widely used in manufacturing, distribution, and in the oil and gas and construction sectors. Like all high-end ERP systems, JDE offers a set of "standard" objects (applications, reports, business functions and business views) that cover fundamental business processes.

The operational reality, however, is that no company runs pure JDE. Every implementation brings with it dozens, if not hundreds, of customisations: standard objects are copied, renamed with a custom prefix (typically in the 55–69 range according to JDE nomenclature), and modified to suit the client's specific processes.

This creates a very concrete problem: how can one know, years later, which standard lies at the base of a custom object? Traceability is lost. Developers move on. Documentation ages or never existed in the first place.

The technical problem: finding the origin of a custom object

In my JDE consultancy work, I frequently need to analyse entire repositories of custom objects — sometimes hundreds of files — to answer questions such as:

- Is this P55001 a copy of P4210? And how far has it drifted?

- Is this Business Function still fundamentally identical to the standard, or has it been rewritten?

- Which objects have been substantially modified and which still correspond to the original?

The problem is non-trivial for several reasons.

The content is noisy. JDE exports contain a large amount of boilerplate: standard comments, copyright headers, repetitive structure declarations. If one compares raw text, the noise overwhelms the signal.

Names are not enough. A P554210 most likely derives from P4210, but a P55001 could derive from P4101, P4201 or from an entirely different object. The naming convention is not always followed, and in some cases the custom prefix bears no logical relationship to the original.

Scale makes manual comparison impractical. With 200 standards and 400 custom objects, a manual comparison generates 80,000 possible combinations. This is not a human-scale operation.

The approach: a hybrid analysis engine

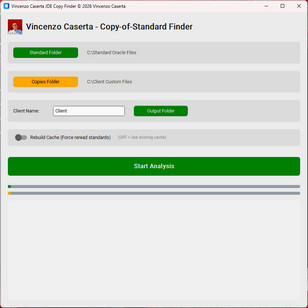

To solve this problem, I developed COS-Analysis, a proprietary desktop tool that approaches the comparison at four levels: a preprocessing step followed by three scoring components, which are combined into a final weighted score.

Level 1 — Content cleaning and normalisation

Before any comparison, each file is subjected to a preprocessing phase:

- Removal of fixed boilerplate (copyright headers, JDE-specific repetitive structure declarations)

- Removal of layout noise (positions, dimensions, UI identifiers)

- Replacement of the specific object name with a generic placeholder, in order to neutralise naming differences during textual comparison

This step is critical: without normalisation, two objects identical in behaviour but with different copyright headers would appear dissimilar, and two different objects with the same name might appear artificially similar.
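The preprocessing steps above can be sketched roughly as follows. This is a minimal illustration, not the actual COS-Analysis code: the regular expressions, the `<OBJ>` placeholder and the sample export lines are all assumptions, since the real JDE export format and cleaning rules are not shown here.

```python
import re

# Hypothetical patterns: stand-ins for the real boilerplate and layout rules.
BOILERPLATE = re.compile(r"^\s*//.*copyright.*$", re.IGNORECASE | re.MULTILINE)
LAYOUT = re.compile(r"\b(?:left|top|width|height)\s*=\s*\d+\b", re.IGNORECASE)


def normalise(text: str, object_name: str, placeholder: str = "<OBJ>") -> str:
    """Strip boilerplate and layout noise, then neutralise the object name."""
    text = BOILERPLATE.sub("", text)
    text = LAYOUT.sub("", text)
    # Replace the object's own name so naming differences do not affect the score.
    text = re.sub(re.escape(object_name), placeholder, text, flags=re.IGNORECASE)
    # Collapse the whitespace left behind by the removals.
    return " ".join(text.split())


standard = "// Copyright Oracle\nform P4210 left=10 top=20\ncall P4210_init"
custom = "// Copyright ACME 2019\nform P554210 left=99 top=5\ncall P554210_init"
print(normalise(standard, "P4210"))
print(normalise(custom, "P554210"))
```

In this made-up example both inputs reduce to the same normalised string, which is exactly the effect the preprocessing phase is meant to achieve before any similarity measure is applied.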

Level 2 — Textual content comparison via Winnowing

The core of the textual comparison is the Winnowing algorithm, a document fingerprinting technique originally developed for academic plagiarism detection. The idea is to represent each document as a set of k-gram fingerprints: hashes extracted from sliding windows of character sequences. A k-gram is a contiguous sequence of k characters (or tokens) extracted from a text; with k=4, for example, the word "HELLO" generates the k-grams HELL and ELLO. K-gram fingerprinting allows texts to be compared even when they have been partially modified.

This approach has a fundamental advantage over classical TF-IDF (Term Frequency–Inverse Document Frequency), which I use as a fallback: it is robust against partial transformations. TF-IDF is a statistical technique that measures how relevant a word is in a document relative to a collection: very frequent words receive a low weight, while rare, specific words receive a high weight. If an object has been modified only in certain sections, Winnowing still detects the structural overlap in the unchanged parts, producing a significant similarity score even in the presence of substantial modifications.
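To make the idea concrete, here is a simplified pure-Python sketch of Winnowing. It is not the Rust module used in the tool: the values of `k` and `window`, the use of CRC32 as the hash, and the comparison of fingerprint sets via intersection over union are all assumptions chosen for illustration. It also keeps only the hash values, not their positions.

```python
import zlib
from typing import Set


def winnow(text: str, k: int = 5, window: int = 4) -> Set[int]:
    """Return a winnowed fingerprint set: the minimum k-gram hash of each window."""
    # Hash every k-gram with CRC32 (deterministic across runs, unlike hash()).
    hashes = [zlib.crc32(text[i:i + k].encode("utf-8"))
              for i in range(len(text) - k + 1)]
    if not hashes:
        return set()  # text shorter than k: nothing to fingerprint
    if len(hashes) < window:
        return {min(hashes)}
    # Slide a window over the hash sequence and keep each window's minimum.
    return {min(hashes[i:i + window])
            for i in range(len(hashes) - window + 1)}


def fingerprint_similarity(a: str, b: str) -> float:
    """Share of fingerprints common to both texts (intersection over union)."""
    fa, fb = winnow(a), winnow(b)
    if not fa or not fb:
        return 0.0
    return len(fa & fb) / len(fa | fb)


original = "IF SalesOrderStatus EQ 540 THEN CALL UpdateLineStatus END"
extended = original + " ELSE CALL WriteAuditRecord"
print(fingerprint_similarity(original, original))
print(fingerprint_similarity(original, extended))
```

Because the second text only appends code, every fingerprint of the first text survives in the second, so the score stays above zero even though the texts differ.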

The most computationally intensive part of Winnowing is implemented via a native module compiled in Rust, integrated into Python. This significantly reduces analysis times on large repositories compared to a purely Python implementation.

Level 3 — Structural fingerprint via Jaccard

Textual comparison alone is not sufficient. Two JDE objects can have similar textual content but a completely different structure. For this reason, I added a second comparison layer based on the concept of a structural fingerprint, a "digital fingerprint" of the JDE object: instead of comparing the full text, a reduced set of semantically significant tokens (forms, tables, views, joins) is extracted from the file, representing the logical structure of the object independently of the code.

For each JDE object type, the token set is different:

- For APPL: Form ID and associated Business View

- For UBE: View and Report Interconnect

- For BSVW: tables and join definitions

- For BSFN: name of the internal function and associated DSTR

The similarity between the two token sets is calculated via the Jaccard index: the ratio of common elements (intersection) to the total number of distinct elements (union). A value of 1 means identical sets, and a value of 0 means no elements in common. The index measures how much the two objects share the same "anatomy", regardless of how the code is written.
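As a small worked example, the Jaccard index on two token sets can be computed as below. The token names are invented for illustration and do not come from a real export; returning 0.0 when both sets are empty is also my own choice here, so that objects with no extractable tokens never look like a match.

```python
from typing import Set


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Ratio of shared tokens (intersection) to all distinct tokens (union)."""
    if not a and not b:
        return 0.0  # assumption: no tokens on either side means no evidence
    return len(a & b) / len(a | b)


# Hypothetical structural fingerprints of an APPL object and its custom copy.
standard_tokens = {"FORM:W4210A", "FORM:W4210E", "BSVW:V4211A"}
custom_tokens = {"FORM:W4210A", "BSVW:V4211A", "FORM:W55X1"}

print(jaccard(standard_tokens, custom_tokens))  # 2 shared / 4 distinct = 0.5
```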

Level 4 — Meta-logic on the normalised name

This fourth level is simpler but has an important impact on accuracy. Each custom object is analysed to identify its "theoretical parent": by removing the custom numeric prefix from the name (e.g. P55 → P, R564 → R), one obtains the name of the standard object from which it most likely derives. A P554210, for instance, points to P4210.

If this match exists in the standards repository, the score of the corresponding candidate receives a weighted bonus. This mechanism drastically reduces false positives in cases where the naming convention has been followed.
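A possible way to derive the theoretical parent is shown below. It is only a sketch under stated assumptions: the pattern expects one type letter followed by a two-digit custom code in the 55–69 range, and the function names `theoretical_parent` and `name_matches` are hypothetical. Real repositories may need additional rules.

```python
import re
from typing import Optional, Set

# Assumption: one type letter, then a two-digit custom code between 55 and 69.
CUSTOM_NAME = re.compile(r"^([A-Z])(5[5-9]|6[0-9])(\w+)$")


def theoretical_parent(custom_name: str) -> Optional[str]:
    """Strip the custom numeric prefix, e.g. P554210 -> P4210."""
    match = CUSTOM_NAME.match(custom_name.upper())
    if match is None:
        return None  # the name does not follow the custom naming convention
    return match.group(1) + match.group(3)


def name_matches(custom_name: str, candidate: str, standards: Set[str]) -> bool:
    """True when the candidate is the theoretical parent and exists as a standard."""
    parent = theoretical_parent(custom_name)
    return parent is not None and parent in standards and parent == candidate


standards = {"P4210", "P4101", "R42565"}
print(theoretical_parent("P554210"))
print(name_matches("P554210", "P4210", standards))
print(name_matches("P55001", "P4101", standards))
```

The last call returns `False`: P55001 yields the parent name P001, which is not in the standards set, so no bonus would apply and the match has to be earned on content and structure alone.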

The final score and classification

The three components — Winnowing, structural Jaccard and meta-logic — are combined with defined weights to produce a final similarity score between 0 and 1. The result is then classified into four categories:

| Threshold | Classification |
|---|---|
| ≥ 0.95 | Exact copy |
| ≥ 0.75 | Copy with minor modifications |
| ≥ 0.50 | Copy with substantial modifications |
| < 0.50 | New object |
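The combination and classification step could look like the sketch below. The thresholds come from the table above, but the weights are purely hypothetical, since their actual values are not given here, and treating the name match as a 0/1 component is likewise an assumption.

```python
# Hypothetical weights, chosen only so that they sum to 1.
WEIGHTS = {"text": 0.5, "structure": 0.3, "name": 0.2}

# Thresholds from the classification table, checked from highest to lowest.
THRESHOLDS = [
    (0.95, "Exact copy"),
    (0.75, "Copy with minor modifications"),
    (0.50, "Copy with substantial modifications"),
]


def final_score(text_sim: float, struct_sim: float, name_match: bool) -> float:
    """Weighted sum of the three components, clamped to the 0..1 range."""
    score = (WEIGHTS["text"] * text_sim
             + WEIGHTS["structure"] * struct_sim
             + WEIGHTS["name"] * (1.0 if name_match else 0.0))
    return max(0.0, min(1.0, score))


def classify(score: float) -> str:
    """Map a final score to one of the four categories."""
    for threshold, label in THRESHOLDS:
        if score >= threshold:
            return label
    return "New object"


print(classify(final_score(0.9, 0.5, False)))
```

With these illustrative weights, an object that ignores the naming convention can reach at most 0.8, which shows how strongly the choice of weights shapes the final classification.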

For each match, the tool automatically generates the comparison command for Beyond Compare (a diff tool widely used in the JDE ecosystem), allowing the analyst to immediately open the visual diff between the custom object and its standard reference with a single click.
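Generating such a command can be as simple as the following sketch. It relies on Beyond Compare accepting the two files to compare as positional arguments; the installation path and the file locations are assumptions and will differ between machines.

```python
# Assumption: a typical Windows installation path; adjust to the local setup.
BCOMPARE_EXE = r"C:\Program Files\Beyond Compare 4\BCompare.exe"


def beyond_compare_command(custom_file: str, standard_file: str,
                           exe: str = BCOMPARE_EXE) -> str:
    """Build a command line that opens both files side by side."""
    # Quote every part so that paths containing spaces survive the shell.
    return f'"{exe}" "{custom_file}" "{standard_file}"'


# Hypothetical export locations for a custom object and its best match.
command = beyond_compare_command(r"C:\jde\custom\P554210.txt",
                                 r"C:\jde\standard\P4210.txt")
print(command)
```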

Output and practical use

At the end of the analysis, COS-Analysis produces an Excel report with all results, sorted by object type and descending similarity score. Each row includes the name of the analysed object, the best standard reference match found, the similarity percentage, the classification and the Beyond Compare command ready for immediate use.

In my day-to-day practice, this report becomes the starting point for upgrade assessment, gap analysis and customisation documentation activities. In contexts where a migration to a new JDE release is being planned, knowing precisely which custom objects are still close to the original and which have drifted away means planning re-engineering activities in an informed manner, rather than relying on intuition.

Conclusions

The problem of identifying copies of JDE standards is a real, recurring and frequently underestimated challenge in project planning. The multi-level hybrid approach I have implemented in COS-Analysis — combining semantic preprocessing, Winnowing with a Rust engine, structural Jaccard similarity and meta-logic on naming — delivers accurate results even on large repositories, in timeframes that make the analysis practicable.

If you manage a JDE system and are facing these challenges, I am available to discuss them.