My Blog

- By Vincenzo Caserta

- Category: My Blog

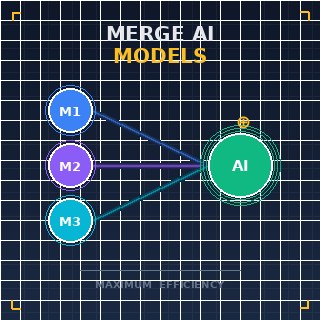

In a high-tech laboratory in Zurich, a data scientist stares at a terminal screen where two distinct neural spirits are about to become one. One model is a master of molecular biology, while the other is an expert in fluid dynamics; separately, they are brilliant, but together, they could revolutionize drug delivery systems. This is the reality of 2026, where the primary challenge is no longer just building bigger systems, but figuring out how to merge multiple specialized Large Language Models (LLMs) into a single, cohesive intelligence. This process, known as model merging, has moved from an experimental curiosity to a critical engineering standard, allowing developers to synthesize the strengths of diverse architectures without the prohibitive costs of retraining from scratch.

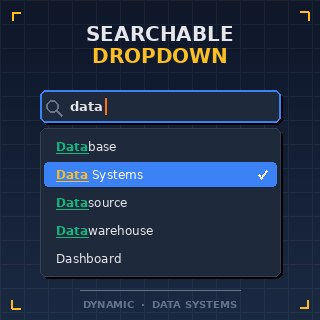

Imagine a genomic researcher in 2026 attempting to isolate a single protein variant from a library of fifty thousand entries using a standard HTML select element. The browser freezes, the scrollbar becomes microscopic, and the user experience collapses under the weight of excessive DOM nodes. To solve this, we must move beyond static elements and embrace programmable components. Learning how to create a dynamic searchable dropdown is no longer just a UI/UX preference; it is a technical necessity for high-performance data management and scientific visualization platforms.

Why do our most advanced predictive models, despite being trained on the most expensive hardware available in 2026, still crumble when faced with a minor change in environmental context? This failure isn't a software bug, but a mathematical inevitability: the inability of current architectures to successfully migrate JD (Joint Distributions) from a source domain to a target domain. As we push the boundaries of autonomous systems and real-time scientific modeling, the industry has finally recognized that data is not a static resource. To maintain accuracy, we must treat data as a fluid entity that requires sophisticated translocation strategies. Understanding how to migrate JD is no longer an academic exercise; it is the cornerstone of robust artificial intelligence.

Recent telemetry data from 2026 suggests that the average enterprise web server now generates upwards of 1.2 terabytes of log data monthly, a volume that renders manual inspection not just inefficient, but practically impossible for human operators. As we navigate an era where microservices dominate the landscape, the humble Nginx log has evolved from a simple text file into a critical stream of high-velocity data. Yet, many administrators remain tethered to archaic, manual workflows that fail to capture the true pulse of their infrastructure. Understanding how to automate Nginx log management is no longer a luxury; it is a fundamental requirement for maintaining system integrity and performance in a hyper-connected world.
